or it might change their assessment of the patient – consciously or unconsciously.

Let’s say I’m doing a study on a medical pill designed to reduce high blood pressure. I know which of my patients are having the expensive new blood pressure pill, and which are having the placebo. One of the people on the swanky new blood pressure pills comes in and has a blood pressure reading that is way off the scale, much higher than I would have expected, especially since they’re on this expensive new drug. So I recheck their blood pressure, ‘just to make sure I didn’t make a mistake’. The next result is more normal, so I write that one down, and ignore the high one.

Blood pressure readings are an inexact technique, like ECG interpretation, X-ray interpretation, pain scores, and many other measurements that are routinely used in clinical trials. I go for lunch, entirely unaware that I am calmly and quietly polluting the data, destroying the study, producing inaccurate evidence, and therefore, ultimately, killing people (because our greatest mistake would be to forget that data is used for serious decisions in the very real world, and bad information causes suffering and death).

There are several good examples from recent medical history where a failure to ensure adequate ‘blinding’, as it is called, has resulted in the entire medical profession being mistaken about which was the better treatment. We had no way of knowing whether keyhole surgery was better than open surgery, for example, until a group of surgeons from Sheffield came along and did a very theatrical trial, in which bandages and decorative fake blood squirts were used, to make sure that nobody could tell which type of operation anyone had received.

Some of the biggest figures in evidence-based medicine got together and did a review of blinding in all kinds of trials of medical drugs, and found that trials with inadequate blinding exaggerated the benefits of the treatments being studied by 17 per cent. Blinding is not some obscure piece of nitpicking, idiosyncratic to pedants like me, used to attack alternative therapies.

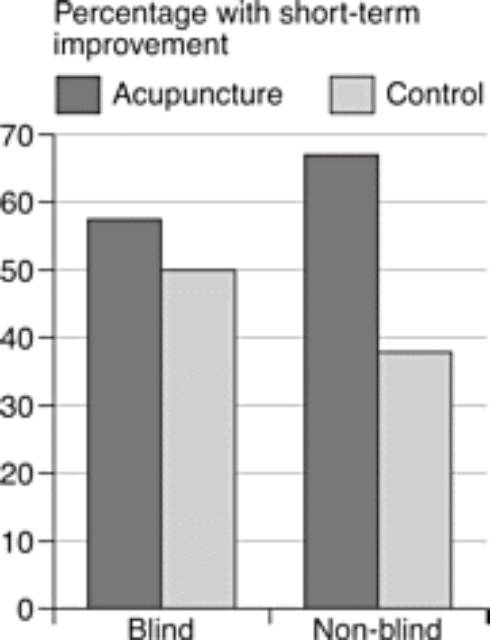

Closer to home for homeopathy, a review of trials of acupuncture for back pain showed that the studies which were properly blinded showed a tiny benefit for acupuncture, which was not ‘statistically significant’ (we’ll come back to what that means later). Meanwhile, the trials which were not blinded – the ones where the patients knew whether they were in the treatment group or not – showed a massive, statistically significant benefit for acupuncture. (The placebo control for acupuncture, in case you’re wondering, is sham acupuncture, with fake needles, or needles in the ‘wrong’ places, although an amusing complication is that sometimes one school of acupuncturists will claim that another school’s sham needle locations are actually their genuine ones.)

So, as we can see, blinding is important, and not every trial is necessarily any good. You can’t just say, ‘Here’s a trial that shows this treatment works,’ because there are good trials, or ‘fair tests’, and there are bad trials. When doctors and scientists say that a study was methodologically flawed and unreliable, it’s not because they’re being mean, or trying to maintain the ‘hegemony’, or to keep the backhanders coming from the pharmaceutical industry: it’s because the study was poorly performed – it costs nothing to blind properly – and simply wasn’t a fair test.

Randomisation

Let’s take this out of the theoretical, and look at some of the trials which homeopaths quote to support their practice. I’ve got a bog-standard review of trials for homeopathic arnica by Professor Edzard Ernst in front of me, which we can go through for examples. We should be absolutely clear that the inadequacies here are not unique, I do not imply malice, and I am not being mean. What we are doing is simply what medics and academics do when they appraise evidence.

So, Hildebrandt

Randomisation is not a new idea. It was first proposed in the seventeenth century by John Baptista van Helmont, a Belgian radical who challenged the academics of his day to test their treatments like blood-letting and purging (based on ‘theory’) against his own, which he said were based more on clinical experience: ‘Let us take out of the hospitals, out of the Camps, or from elsewhere, two hundred, or five hundred poor People, that have Fevers, Pleurisies,

It’s rare to find an experimenter so careless that they’ve not randomised the patients at all, even in the world of CAM. But it’s surprisingly common to find trials where the method of randomisation is inadequate: they look plausible at first glance, but on closer examination we can see that the experimenters have simply gone through a kind of theatre, as if they were randomising the patients, but still leaving room for them to influence, consciously or unconsciously, which group each patient goes into.

In some inept trials, in all areas of medicine, patients are ‘randomised’ into the treatment or placebo group by the order in which they are recruited onto the study – the first patient in gets the real treatment, the second gets the placebo, the third the real treatment, the fourth the placebo, and so on. This sounds fair enough, but in fact it’s a glaring hole that opens your trial up to possible systematic bias.

Let’s imagine there is a patient who the homeopath believes to be a no-hoper, a heart-sink patient who’ll never really get better, no matter what treatment he or she gets, and the next place available on the study is for someone going into the ‘homeopathy’ arm of the trial. It’s not inconceivable that the homeopath might just decide – again, consciously or unconsciously – that this particular patient ‘probably wouldn’t really be interested’ in the trial. But if, on the other hand, this no-hoper patient had come into clinic at a time when the next place on the trial was for the placebo group, the recruiting clinician might feel a lot more optimistic about signing them up.
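If you like to see this sort of thing run, here is a minimal sketch of that scenario in Python. Everything in it is an assumption made up for illustration: the function name `simulate_alternation`, the 30 per cent share of ‘no-hoper’ patients, and a recruiter who turns away every single no-hoper whenever the next slot is in the treatment arm. Real bias would be far subtler, but the mechanism is the same.

```python
import random


def simulate_alternation(n_patients=1000, p_no_hoper=0.3, seed=0):
    """Share of 'no-hoper' patients in each arm under alternating allocation.

    Hypothetical model: the recruiter always knows which arm is next and
    quietly declines to enrol a no-hoper when the next slot is 'treatment'.
    """
    rng = random.Random(seed)
    arms = {"treatment": [], "placebo": []}
    next_arm = "treatment"  # first patient in gets the real treatment

    for _ in range(n_patients):
        no_hoper = rng.random() < p_no_hoper
        # The hole: the next allocation is predictable, so it can
        # influence who gets signed up in the first place.
        if no_hoper and next_arm == "treatment":
            continue  # 'probably wouldn't really be interested'
        arms[next_arm].append(no_hoper)
        next_arm = "placebo" if next_arm == "treatment" else "treatment"

    # Fraction of enrolled patients in each arm who were no-hopers
    return {arm: sum(flags) / len(flags) for arm, flags in arms.items()}


if __name__ == "__main__":
    print(simulate_alternation())
```

Under these assumptions the treatment arm ends up with no no-hopers at all, while the placebo arm gets its full share, so the treatment would look better even if it did nothing whatsoever.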

The same goes for all the other inadequate methods of randomisation: by last digit of date of birth, by date seen in clinic, and so on. There are even studies which claim to randomise patients by tossing a coin, but forgive me (and the entire evidence-based medicine community) for worrying that tossing a coin leaves itself just a little bit too open to manipulation. Best of three, and all that. Sorry, I meant best of five. Oh, I didn’t really see that one, it fell on the floor.

There are plenty of genuinely fair methods of randomisation, and although they require a bit of nous, they come at no extra financial cost. The classic is to make people call a special telephone number, where someone is sitting with a computerised randomisation programme (and the experimenter doesn’t even do that until the patient is fully signed up and committed to the study). This is probably the most popular method amongst meticulous researchers, who are keen to ensure they are doing a ‘fair test’, simply because you’d have to be an out-and-out charlatan to mess it up, and you’d have to work pretty hard at the charlatanry too. We’ll get back to laughing at quacks in a minute, but right now you are learning about one of the most important ideas of modern intellectual history.
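The telephone arrangement can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a description of any real trial system: the class name `CentralRandomiser`, the `enrolled` flag and the two arm labels are all invented here. The two properties worth noticing are that nothing is revealed until the patient is committed, and that an allocation, once made, cannot be redrawn.

```python
import secrets


class CentralRandomiser:
    """Hypothetical stand-in for the person at the end of the phone line."""

    def __init__(self, arms=("treatment", "placebo")):
        self.arms = tuple(arms)
        self.log = {}  # patient_id -> arm, kept as an audit trail

    def allocate(self, patient_id, enrolled):
        # Reveal nothing until the patient is fully signed up, so the
        # recruiter cannot know the arm while deciding whom to enrol.
        if not enrolled:
            raise ValueError("patient must be fully enrolled before allocation")
        # Draw once only: no 'best of three', no coins on the floor.
        if patient_id not in self.log:
            self.log[patient_id] = secrets.choice(self.arms)
        return self.log[patient_id]


if __name__ == "__main__":
    service = CentralRandomiser()
    print(service.allocate("patient-001", enrolled=True))
```

Asking again for the same patient returns the same answer, which is the whole point: the experimenter gets no second go.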

Does randomisation matter? As with blinding, people have studied the effect of randomisation in huge reviews of large numbers of trials, and found that the ones with dodgy methods of randomisation overestimate treatment effects by 41 per cent. In reality, the biggest problem with poor-quality trials is not that they’ve used an inadequate method of randomisation, it’s that they don’t tell you

In fact, as a general rule it’s always worth worrying when people don’t give you sufficient details about their methods and results. As it happens (I promise I’ll stop this soon), there have been two landmark studies on whether inadequate information in academic articles is associated with dodgy, overly flattering results, and yes, studies which don’t report their methods fully do overstate the benefits of the treatments, by around 25 per cent. Transparency and detail are everything in science. Hildebrandt

Let’s go back to the eight studies in Ernst’s review article on homeopathic arnica – which we chose pretty