There is a common misconception that robots will do only what we have programmed them to do. Unfortunately, such a belief is sorely outdated, harking back to a time when… programs could be written and understood by a single person.

That quote is lifted directly from the report presented to the Navy by Patrick Lin, chief compiler. What’s really worrying is that the report was prompted by a frightening incident in 2008 when an autonomous drone in the employ of the U.S. Army suffered a software malfunction that caused the robot to aim exclusively at friendly targets. Luckily a human triggerman was able to stop it before any fatalities occurred, but it scared the brass enough that they sponsored a massive report to investigate it. The study is extremely thorough, but in a very simple nutshell, it states that the size and complexity of modern AI efforts basically make their code impossible to fully analyze for potential danger spots. Hundreds if not thousands of programmers write millions upon millions of lines of code for a single AI, and fully checking the safety of this code (verifying how the robots will react in every given situation) just isn’t possible. Luckily, Dr. Lin has a solution: He proposes the introduction of learning logic centers that will evolve over the course of a robot’s lifetime, teaching them the ethical nature of warfare through experience. As he puts it:

We are going to need a code. These things are military, and they can’t be pacifists, so we have to think in terms of battlefield ethics. We are going to need a warrior code.

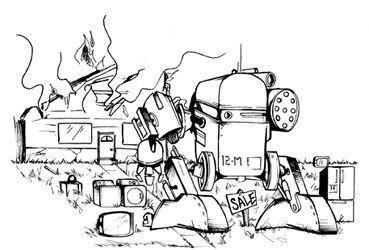

Robots are going to have to learn abstract morality, according to Dr. Lin, and those lessons, like it or not, are going to start on the battlefield. The battlefield: the one single situation that emphasizes the gray area of human morality like nothing else. Military orders can often directly contradict your personal morality and, as a soldier, you’re often faced with a difficult decision between loyalty to your duty and loyalty to your own code of ethics. Human beings have struggled with this dilemma since the very inception of thought—a time when our largest act of warfare was throwing sticks at one another for pooping too close to the campfire. But now war is large scale, and robots are not going to be few and far between on the battlefield: Congress has mandated that nearly a third of all ground combat vehicles should be unmanned within five years. So to sum up, robots are going to get their lessons in Morality 101 in the intense and complicated realm of modern warfare, where they’re going to do their homework with machine guns and explosives.

• Never kill an unarmed robot, unless it was built without arms.

• Protect the weak at all costs (they are easy meals).

• Never turn your back on a fight (unless you have rocket launchers mounted there).

But hey, you know that old saying: “Why do we make mistakes? So we can learn from them.”

Some mistakes are just more rocket propelled than others.

20. ROBOT ABILITY

The robots would have to be more effective fighters and hunters than we already are in order to do away with us, and that doesn’t just mean weapons. Anything can be equipped with nearly any weapon, and a robot with a chain saw is no more inherently deadly than a squirrel with a chain saw; it’s all in the ability to use it. It’s like they say:

Give a squirrel a chain saw, you run for a day. Teach a squirrel to chain saw, and you run forever.

And we’re handing those metaphorical chain saws to those metaphorical squirrels like it’s National Trade Your Nuts for Blades Day.

Take, for example, the issue of maneuverability. As experts in avionics or fans of

Well, it’s official: The government is taking its ideas directly from the Trapper Keeper sketches of twelve-year-old boys. Expect to be marveling at the next anticipated leap in military avionics: a Camaro jumping a skyscraper while on fire and surrounded by floating malformed boobs.

Q: Is object resting gently on the ground?

[ ] Yes. (Fail.)

[ ] No. (Pass!)

Oh, but in all this hot, missile-on-missile action, there’s something fundamental you may have missed about the MKV: That whole “target-tracking” thing. The procedure at the National Hover Test Facility demonstrated the MKV’s ability to “recognize and track a surrogate target in a flight environment.” It’s not just agility that’s being tested here, but also target tracking and independent recognition. And that’s a big deal: A key drawback in robotics so far has been recognition—it’s challenging to create a robot that can even self-navigate through a simple hallway, much less one that recognizes potential targets autonomously and tracks them (and by “them” I mean you) well enough to take them down (and by “take them down” I mean painfully explode).

These advancements in independent recognition are not just limited to high-tech military hardware, either, as you probably could have guessed. And as you can also probably guess, there is a cutesy candy shell covering the rich milk chocolate of horror below. Students at MIT have a robot named Nexi that is specifically designed to track, recognize, and respond to human faces. Infrared LEDs map the depth of field in front of the robot, and that depth information is then paired with the images from two stereo cameras. All three are combined to give the robot a full